Building Cost-Effective Agent Systems

Analyzing the success rates and the hidden tax of agentic AI.

Your AI agent bill just tripled last month. You're not alone. While token prices have dropped over 99% since 2023, overall AI spending keeps climbing (Jevons Paradox strikes again!). The culprit isn't the price per token. It's how many tokens your architecture burns to get anything done.

This is the hidden tax of agentic AI. You thought you were building cost-efficient automation. Instead, you built a token incinerator.

Here's what the numbers actually tell us. Token usage drives 70% of AI agent expenses, with moderate deployments consuming 5 to 10 million tokens monthly at a cost of $1,000 to $5,000 according to Azilen. But that's just the API bill. The real damage happens when you scale.

A developer shared on Reddit ("Spent 9.5B OpenAI tokens in January") that they burned through 9.5 billion tokens in January 2025. According to a case study by Evalics, after analyzing usage patterns, optimizing prompts, and switching to GPT-4o-mini with caching enabled, they cut output tokens by 70% and overall costs by 40%.

That's the difference between a sustainable business and a cash bonfire.

Why Your Token Math Is Wrong

Most teams calculate costs like this: take the model price per million tokens, multiply by expected volume, done. That math works for simple API calls. It fails spectacularly for agentic workflows.

Your demo chatbot says "Your bill is $X" using 200 tokens. Your production bot thinks through payment history, customer tier, previous complaints, billing system verification, and tone guidelines, then provides the same answer. Same output, 1,200 tokens. Your cost model just broke.

According to Dataiku's analysis "The Agentic AI Cost Iceberg," production AI systems can use 6x the tokens of demo systems for the same answer, with the 200-token demo response becoming a 1,200-token production interaction. This token inflation isn't a bug. It's the cost of reliability.

The problem compounds with agentic workflows. Models like o3, DeepSeek R1, Grok 4, and Kimi K2 introduced multi-step processes that caused token consumption per task to jump 10x to 100x since December 2023 according to Adam Holter's analysis "AI Costs in 2025: Cheaper Tokens, Pricier Workflows."
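To see why the spreadsheet math breaks, here is a minimal Python sketch comparing it with a multi-step estimate. It assumes a hypothetical agent loop that re-sends its full, growing context as input on every step. The function names, token counts, and prices are illustrative placeholders, not figures from Dataiku or Holter.

```python
def naive_cost(queries: int, tokens_per_query: int, price_per_m: float) -> float:
    """The spreadsheet estimate: volume times a single per-token price."""
    return queries * tokens_per_query * price_per_m / 1_000_000


def agentic_cost(
    queries: int,
    system_tokens: int,
    tokens_added_per_step: int,
    output_tokens_per_step: int,
    steps: int,
    input_price_per_m: float,
    output_price_per_m: float,
) -> float:
    """Estimate for a hypothetical agent loop that re-sends its context each step.

    Assumption: each step's input is the system prompt plus every tool result
    and model output produced so far, so billed input grows step by step.
    """
    total_in = 0
    total_out = 0
    context = system_tokens
    for _ in range(steps):
        total_in += context  # the whole context is billed again as input
        total_out += output_tokens_per_step
        # tool results and the model's own output are appended for the next step
        context += tokens_added_per_step + output_tokens_per_step
    per_query = (total_in * input_price_per_m
                 + total_out * output_price_per_m) / 1_000_000
    return queries * per_query
```

With these made-up inputs, `naive_cost(10_000, 200, 15.0)` comes to $30, while `agentic_cost(10_000, 1_000, 500, 200, 5, 3.0, 15.0)` comes to about $510, a 17x gap driven mostly by input tokens billed again on every step.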

You're not paying for answers anymore. You're paying for thinking.

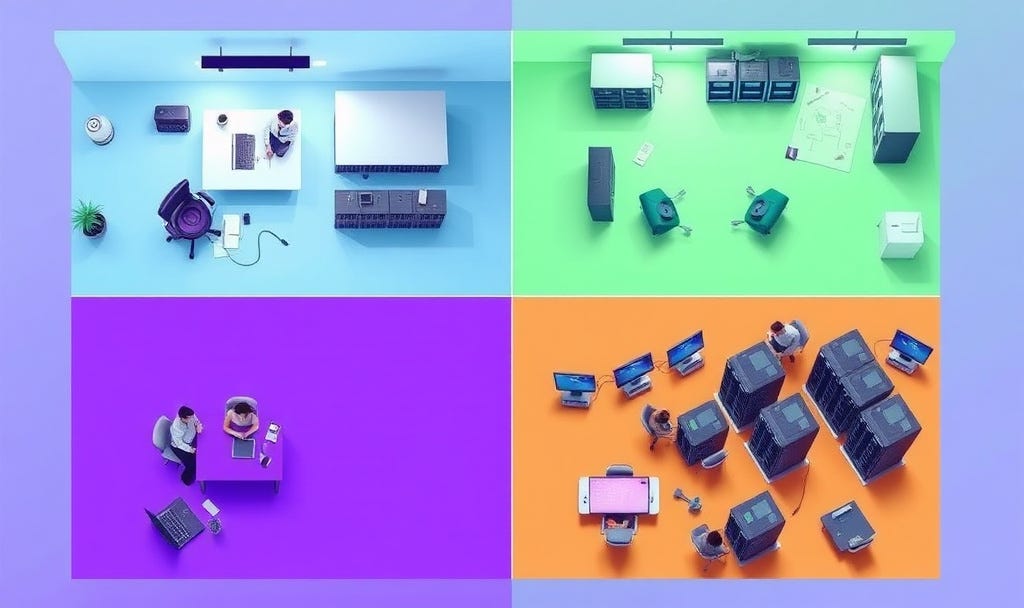

Single-Agent vs. Multi-Agent Economics

Here's where architecture decisions get expensive. A single-agent system handles everything through one model. Simple, predictable, cheap. A multi-agent system splits work across specialized agents. Complex, powerful, potentially ruinous.

The math seems straightforward. According to Khushbu Shah's analysis "Single Agent vs Multi Agent in AI: What Your Project Hinges On" published on ProjectPro, single-agent systems scale costs linearly with task complexity, making them cost-efficient for startups and projects with narrow scopes. But that linear scaling hits a wall fast.

Try building customer support with a single agent. It handles FAQs fine. Then someone asks about returns for a damaged product bought with a promotion that expired. Your single agent needs the full context of your return policy, promotion terms, inventory system, and customer history. Every query burns thousands of tokens retrieving information it might not need.

Multi-agent systems fix this through specialization. One agent handles returns, another manages promotions, a third checks inventory. Each carries less context. You can lower costs by using cheaper models for most agents and reserving expensive ones for important tasks.
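A minimal sketch of that idea, assuming each specialist agent is pinned to a model tier up front. The tier names, prices, and agent-to-model mapping are hypothetical; substitute your provider's actual models and rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    input_price_per_m: float   # USD per million input tokens
    output_price_per_m: float  # USD per million output tokens

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        return (input_tokens * self.input_price_per_m
                + output_tokens * self.output_price_per_m) / 1_000_000


# Hypothetical tiers and prices, for illustration only.
CHEAP = ModelTier("small-model", 0.15, 0.60)
PREMIUM = ModelTier("frontier-model", 3.00, 15.00)

# Each specialist gets the cheapest tier that can do its job;
# only the high-stakes agent (refund decisions) gets the premium model.
AGENT_MODELS = {
    "inventory": CHEAP,
    "promotions": CHEAP,
    "returns": PREMIUM,
}


def routed_cost(calls: list[tuple[str, int, int]]) -> float:
    """Total cost when each (agent, input_tokens, output_tokens) call uses its assigned tier."""
    return sum(AGENT_MODELS[agent].cost(i, o) for agent, i, o in calls)


def uniform_cost(calls: list[tuple[str, int, int]], tier: ModelTier = PREMIUM) -> float:
    """Total cost if every agent ran on the same tier."""
    return sum(tier.cost(i, o) for _, i, o in calls)
```

For three calls of 2,000/200, 1,500/150, and 3,000/400 input/output tokens to the inventory, promotions, and returns agents, the routed total is about $0.0157 versus $0.0308 if every agent ran on the premium tier.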

BNY Mellon deployed a multi-agent system called Eliza where 13 specialized agents handle financial workflows autonomously. According to VentureBeat's analysis "How big U.S. bank BNY manages armies of AI agents," these agents range from client agents to segment agents, and they "negotiate with each other" to determine product recommendations based on marketing segments. Each agent performs domain-specific tasks like document parsing or knowledge retrieval. The result? Better efficiency and scalability than dumping everything into one massive context window.

But multi-agent systems aren't free money. They require orchestration, monitoring, and careful design. Development costs are higher. Maintenance is harder. You're trading API costs for engineering time.

Here's the decision framework. Use single agents when you're prototyping, handling narrow tasks, or operating with limited engineering resources. Switch to multi-agent when domain specialization matters, cross-functional coordination is required, or future scalability justifies the upfront investment.

The Token Efficiency Multiplier

Retrieval-Augmented Generation fundamentally changes the economics. Instead of stuffing your entire knowledge base into every prompt, you fetch only relevant information.

The token savings are large.

Traditional RAG splits documents by token count. Every 1,000 tokens becomes a chunk. This is simple and terrible. It breaks context mid-sentence, duplicates information across chunks, and forces you to retrieve more pieces to get complete answers.

Context-aware RAG fixes this. According to Microsoft's technical article "Context-Aware RAG System with Azure AI Search to Cut Token Costs and Boost Accuracy," their implementation using Azure AI Search achieved an 80 to 85% reduction in token usage while improving both accuracy and response speed. Instead of fixed-size chunks, it creates semantically complete segments. Your retriever pulls fewer pieces. Your LLM processes less noise.
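The difference between the two chunking styles shows up even on a toy example. This is a minimal sketch, not Microsoft's implementation: it uses whitespace-separated words as a stand-in for tokens (a real system would count with the model's tokenizer) and treats whole sentences as the "semantically complete" unit, which is a simplification of semantic chunking.

```python
import re


def fixed_size_chunks(text: str, max_tokens: int) -> list[str]:
    """Naive chunking: cut every max_tokens words, ignoring sentence boundaries."""
    words = text.split()
    return [" ".join(words[i:i + max_tokens]) for i in range(0, len(words), max_tokens)]


def sentence_aware_chunks(text: str, max_tokens: int) -> list[str]:
    """Pack whole sentences into chunks of at most max_tokens words.

    A single sentence longer than max_tokens becomes its own oversized chunk
    rather than being split mid-sentence.
    """
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    chunks: list[str] = []
    current: list[str] = []
    current_len = 0
    for sentence in sentences:
        n = len(sentence.split())
        # Close the current chunk if this sentence would overflow it.
        if current and current_len + n > max_tokens:
            chunks.append(" ".join(current))
            current, current_len = [], 0
        current.append(sentence)
        current_len += n
    if current:
        chunks.append(" ".join(current))
    return chunks
```

On "Returns are accepted within 30 days. Damaged items ship free. Promotions end at midnight.", `fixed_size_chunks(text, 5)` cuts the first sentence after "30", while `sentence_aware_chunks(text, 10)` keeps every sentence whole.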

Method choice matters too. A study titled "Maximizing RAG efficiency: A comparative analysis of RAG methods," published by Cambridge University Press, found that different RAG methods produced measurably different results. The Refine method resulted in an 18.6% reduction in token usage, while the Reciprocal method demonstrated a 12.5% reduction. Your choice of RAG architecture isn't a detail. It's a line item.

But RAG introduces its own costs. You’re paying for embeddings, vector storage, and retrieval operations. OpenAI charges around $0.10 per million tokens for embeddings, while Google Gemini charges $0.15 per million input tokens according to Net Solutions. Those costs accumulate silently.

The break-even calculation matters. If you’re answering one-off questions with constantly changing data, RAG might cost more than just using a larger context window. If you’re handling thousands of queries against the same knowledge base, RAG crushes the competition.
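A rough break-even sketch, assuming the whole knowledge base fits in the long-context prompt, that RAG re-embeds the full corpus on every update, and that vector storage and retrieval add a flat monthly fee. The $0.10 per million embedding price echoes the OpenAI figure above; every other number, including the $50 infrastructure fee, is a hypothetical placeholder.

```python
import math
from typing import Optional


def long_context_cost(queries: int, kb_tokens: int, input_price_per_m: float) -> float:
    """Every query sends the entire knowledge base as input."""
    return queries * kb_tokens * input_price_per_m / 1_000_000


def rag_cost(queries: int, kb_tokens: int, reembeds: int, retrieved_tokens: int,
             query_tokens: int, input_price_per_m: float, embed_price_per_m: float,
             fixed_infra: float) -> float:
    """Flat infra fee, corpus (re-)embedding, then a small prompt plus a query embedding per call."""
    indexing = reembeds * kb_tokens * embed_price_per_m / 1_000_000
    per_query = (retrieved_tokens * input_price_per_m
                 + query_tokens * embed_price_per_m) / 1_000_000
    return fixed_infra + indexing + queries * per_query


def break_even_queries(kb_tokens: int, reembeds: int, retrieved_tokens: int,
                       query_tokens: int, input_price_per_m: float,
                       embed_price_per_m: float, fixed_infra: float) -> Optional[int]:
    """Smallest monthly query count at which RAG is strictly cheaper, or None if never."""
    upfront = fixed_infra + reembeds * kb_tokens * embed_price_per_m / 1_000_000
    saving_per_query = (kb_tokens * input_price_per_m
                        - retrieved_tokens * input_price_per_m
                        - query_tokens * embed_price_per_m) / 1_000_000
    if saving_per_query <= 0:
        return None  # retrieval costs as much as the full context: RAG never pays off
    # floor + 1 so that RAG is strictly cheaper, not merely equal, at the returned count
    return math.floor(upfront / saving_per_query) + 1
```

With a 100,000-token corpus re-embedded 30 times a month, 2,000 retrieved tokens per query, and $3 per million input tokens, RAG becomes cheaper from the 172nd query of the month onward. Cut the query count or raise the infrastructure fee and long context wins, which is the one-off, fast-changing case described above.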

The Hidden Multiplier: Reasoning Tokens

Here's what most teams miss. Newer models like OpenAI's o1, DeepSeek R1, and Claude Opus don't just generate answers. They generate reasoning. Those thinking tokens are billed as output tokens, which cost 2x to 5x as much as input tokens.

Grok 4 costs $3 per million input tokens and $15 per million output tokens. Looks reasonable. But with reasoning on, it can effectively cost 3x as much as Claude Sonnet with thinking enabled, and nearly 15x as much as Sonnet without it, according to one cost analysis. Your "efficient" model choice just became your most expensive one.
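The arithmetic behind that trap is short. A minimal sketch using the Grok 4 list prices quoted above ($3 in, $15 out per million tokens); the token counts are hypothetical, and the only rule it encodes is that reasoning tokens are billed at the output rate.

```python
def request_cost(input_tokens: int, visible_output_tokens: int, reasoning_tokens: int,
                 input_price_per_m: float = 3.0, output_price_per_m: float = 15.0) -> float:
    """USD cost of one request; hidden reasoning tokens are billed as output."""
    billed_output = visible_output_tokens + reasoning_tokens
    return (input_tokens * input_price_per_m
            + billed_output * output_price_per_m) / 1_000_000
```

A request with 2,000 input tokens and a 300-token answer costs $0.0105 without reasoning. Add 3,000 hidden reasoning tokens and the same visible answer costs $0.0555, a bit over 5x.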

This is the 2026 cost trap. Reasoning models are better. They handle complex tasks with fewer retries. They need less hand-holding. But they burn tokens like crazy to do their thinking. If you don't need the reasoning capability, you're subsidizing intelligence you don't use.

The optimization here is simple, if blunt. Treat reasoning settings like a spending dial. Use reasoning for complex decisions, planning, and multi-step workflows. Turn it off for simple queries, data formatting, and straightforward transformations. Your bill will thank you.
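One way to make the dial explicit is a policy table keyed by task type. This is a sketch under assumptions: the task categories and thinking-token budgets are hypothetical, and you would map the budget onto whatever reasoning-effort or thinking-budget setting your provider actually exposes.

```python
# Hypothetical policy: thinking-token budget per task type (0 means reasoning off).
REASONING_BUDGET = {
    # Reasoning on: complex decisions, planning, multi-step workflows.
    "complex_decision": 4_096,
    "planning": 8_192,
    "multi_step_workflow": 8_192,
    # Reasoning off: simple queries, formatting, straightforward transformations.
    "simple_query": 0,
    "data_formatting": 0,
    "transformation": 0,
}


def reasoning_budget(task_type: str) -> int:
    """Return the thinking-token budget for a task type.

    Unknown task types default to 0 so new traffic cannot silently start
    burning reasoning tokens; opt in deliberately instead.
    """
    return REASONING_BUDGET.get(task_type, 0)


def reasoning_enabled(task_type: str) -> bool:
    return reasoning_budget(task_type) > 0
```

Defaulting unknown tasks to "off" is a cost-first choice; a quality-first team might default to "on" and audit the spend instead.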

Practical Success Metrics That Actually Matter

Forget cost per token. That number means nothing without context. What matters is cost per successful outcome. Did the agent complete the task? Did it require human intervention? How many tokens did it burn getting there?

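For example, a financial services firm tracks "cost per resolved ticket." Their single-agent support bot costs $0.15 per interaction. Their multi-agent system costs $0.32. But the multi-agent system resolves 40% more tickets without escalation. On API spend alone that isn't enough, since $0.32 divided by 1.4 is still about $0.23, more than $0.15. Add the cost of the humans who handle every escalated ticket, though, and cost per successful resolution favors multi-agent.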
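The comparison can be made concrete with two formulas: API cost per resolved ticket, and total cost per ticket once human escalations are priced in. This is a sketch with assumed inputs: the $0.15 and $0.32 per-interaction costs come from the example, but the 50% and 70% resolution rates (reading "40% more" as relative) and the $5 cost of a human-handled escalation are hypothetical.

```python
def api_cost_per_resolution(cost_per_interaction: float, resolution_rate: float) -> float:
    """Model spend divided by the share of tickets the bot resolves on its own."""
    return cost_per_interaction / resolution_rate


def total_cost_per_ticket(cost_per_interaction: float, resolution_rate: float,
                          escalation_cost: float) -> float:
    """Model spend plus the human cost of every ticket the bot fails to resolve."""
    return cost_per_interaction + (1 - resolution_rate) * escalation_cost


def break_even_escalation_cost(cost_a: float, rate_a: float,
                               cost_b: float, rate_b: float) -> float:
    """Escalation cost at which systems A and B have equal total cost per ticket.

    Solves cost_a + (1 - rate_a) * E == cost_b + (1 - rate_b) * E for E.
    """
    return (cost_b - cost_a) / (rate_b - rate_a)
```

Under these assumptions the single-agent bot is cheaper on API spend alone ($0.30 vs. about $0.46 per resolution), but the multi-agent system wins on total cost per ticket ($1.82 vs. $2.65). The break-even escalation cost is $0.85, so almost any human touch tips the balance.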

E-commerce companies measure differently. They track cost per completed order, cost per product recommendation that converts, cost per abandoned cart recovered. Dahbahm Digital Media, a digital agency specializing in AI automation, deployed AgentiveAIQ agents across 15 client stores. According to their case study, they achieved a 73% drop in tier-1 support tickets and recovered 25% of abandoned carts within weeks. The token costs became irrelevant.

Your metrics should reflect your business model. B2B SaaS platforms might track cost per qualified lead generated. Customer support operations measure cost per ticket resolved. Internal tools calculate cost per automation completed. Pick the metric that ties to revenue or savings.

Then optimize ruthlessly. Profile your token usage. Find the expensive operations. Replace GPT-4 with GPT-3.5 for simple tasks. Implement caching for repeated queries. Use RAG instead of long contexts. Switch to cheaper models for non-critical paths.
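Caching repeated queries is the easiest item on that list to sketch. Below is a minimal exact-match response cache, assuming a hypothetical `call_model(model, prompt)` function that wraps your provider's client. This is application-level caching, distinct from the provider-side prompt caching in the Reddit example; the two can be combined. It has no eviction or expiry, so treat it as a starting point.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """Exact-match cache for repeated queries (sketch: no eviction, no TTL)."""

    def __init__(self, call_model: Callable[[str, str], str]):
        self._call_model = call_model  # hypothetical: (model, prompt) -> completion text
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Collapse whitespace only; case is kept because it can change meaning.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def complete(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1  # served from cache: zero tokens billed
            return self._store[key]
        self.misses += 1
        result = self._call_model(model, prompt)
        self._store[key] = result
        return result
```

The model name is part of the key, so switching a path to a cheaper model never serves answers cached from the old one.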

The Architecture Decision Tree

You can't build cost-effective agent systems without a framework. Here's how to think through the choices.

Start with scope. Is this a narrow, well-defined task? Single agent, simple tools, minimal context. Is it a complex workflow spanning multiple domains? Multi-agent with specialized roles and orchestration.

Consider data characteristics. Static knowledge base that rarely changes? Long context windows might work. Dynamic data that updates constantly? RAG architecture. Massive corpus that never changes? Fine-tuning might be cheaper long-term.

Evaluate query patterns. One-off questions from users? Pay per query with stateless agents. Ongoing conversations with memory? State management costs matter. Batch processing? Optimize for throughput over latency.

Look at scale. Thousands of queries per day? Caching and optimization are mandatory. Millions? You need dedicated infrastructure and cost monitoring. Billions? You're building custom solutions and negotiating enterprise pricing.

Factor in engineering capacity. Small team? Simple single-agent architecture with managed services. Experienced team? Multi-agent with custom orchestration. Limited ML experience? Stick to frameworks and pre-built tools.
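The checklist translates naturally into a function. This is a sketch of the rules of thumb above, nothing more: the `Workload` fields are hypothetical, each question is answered independently (so scope and team size can disagree, and you resolve that by judgment), and the scale thresholds read "thousands," "millions," and "billions" as queries per day.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    multi_domain: bool         # complex workflow across domains vs. narrow task
    data_changes_often: bool   # dynamic data vs. static knowledge base
    corpus_is_massive: bool    # very large corpus
    conversational: bool       # ongoing conversations with memory
    batch: bool                # offline batch processing
    queries_per_day: int
    experienced_team: bool
    ml_experience: bool


def recommend(w: Workload) -> dict[str, str]:
    plan: dict[str, str] = {}

    # Scope
    plan["architecture"] = ("multi-agent with specialized roles and orchestration"
                            if w.multi_domain
                            else "single agent, simple tools, minimal context")

    # Data characteristics
    if w.data_changes_often:
        plan["knowledge"] = "RAG"
    elif w.corpus_is_massive:
        plan["knowledge"] = "consider fine-tuning"
    else:
        plan["knowledge"] = "long context window"

    # Query patterns
    if w.batch:
        plan["serving"] = "batch: optimize throughput over latency"
    elif w.conversational:
        plan["serving"] = "stateful: budget for state management"
    else:
        plan["serving"] = "stateless, pay per query"

    # Scale (queries per day)
    if w.queries_per_day >= 1_000_000_000:
        plan["scale"] = "custom solutions and enterprise pricing"
    elif w.queries_per_day >= 1_000_000:
        plan["scale"] = "dedicated infrastructure and cost monitoring"
    elif w.queries_per_day >= 1_000:
        plan["scale"] = "caching and optimization are mandatory"
    else:
        plan["scale"] = "no scale-specific requirement from this checklist"

    # Engineering capacity
    if not w.ml_experience:
        plan["team"] = "stick to frameworks and pre-built tools"
    elif w.experienced_team:
        plan["team"] = "custom orchestration is viable"
    else:
        plan["team"] = "simple architecture on managed services"

    return plan
```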

The Economic Reality

Token prices keep falling. In 2025, Berkeley researchers reproduced a model similar to one that cost an estimated $100 million, using only $30 of compute, according to Monetizely. That's a cost reduction of more than 99.99%. You'd think AI would be getting cheaper.

It's not. Because we're using AI differently. We went from simple completions to multi-step reasoning. From static prompts to dynamic agent workflows. From dozens of tokens to thousands per task. The price per token dropped. The tokens per task exploded.

This creates a weird dynamic. The companies winning on costs aren't the ones using the cheapest models. They're the ones building architectures that minimize wasted tokens. They cache aggressively. They route intelligently. They measure obsessively.

The lesson is clear. In 2026, architectural choices matter more than model prices. You can use the most expensive model and still come out ahead if your architecture is efficient. Or you can use the cheapest model and go bankrupt if you're burning millions of wasted tokens.

Build for Efficiency, Not Just Intelligence

The smartest agent isn’t always the most profitable one. Sometimes the dumbest solution that works is the one that scales.

Consider classification tasks. You could use GPT-4.5 to categorize customer emails. It’ll be incredibly accurate. It’ll also cost you about $0.075 per 1,000 input tokens. Or you could use a fine-tuned BERT model that costs next to nothing to run once it’s trained. It might be 95% as accurate and 100x cheaper.

The same logic applies to agent architectures. You could build a sophisticated multi-agent system with planning, reflection, and self-correction. It'll handle edge cases beautifully. It'll also burn tokens on every interaction. Or you could build a simple single-agent system with good error handling. It'll cover 80% of cases for 20% of the cost.

This isn't about dumbing down your AI. It's about right-sizing intelligence to the problem. Use expensive reasoning for expensive problems. Use cheap classification for cheap problems.
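A common way to put this into practice is a model cascade: run the cheap classifier first and pay for the large model only when the cheap one is unsure. A minimal sketch; the two classifier callables, the 0.9 confidence threshold, and the per-call costs are hypothetical placeholders rather than measured figures.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Cascade:
    cheap: Callable[[str], tuple[str, float]]  # hypothetical: returns (label, confidence)
    expensive: Callable[[str], str]            # hypothetical: large-model fallback
    threshold: float = 0.9
    cheap_cost: float = 0.00001                # placeholder USD per call
    expensive_cost: float = 0.01               # placeholder USD per call
    spent: float = 0.0
    escalations: int = 0

    def classify(self, text: str) -> str:
        label, confidence = self.cheap(text)
        self.spent += self.cheap_cost
        if confidence >= self.threshold:
            return label
        # Low confidence: pay for the bigger model only on the hard cases.
        self.escalations += 1
        self.spent += self.expensive_cost
        return self.expensive(text)
```

If most emails clear the threshold, the average cost stays close to the cheap model's. Tune the threshold on labeled data: raising it buys accuracy at the price of more escalations.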

Don't bring an Opus to a Haiku fight.

The developers who master this will build sustainable agentic AI systems. The ones who chase capabilities without watching costs will build expensive science experiments.

Your token budget is your product strategy. Spend it wisely.