What Makes a Task "Agentic"?

Everyone's talking about agentic AI. The term shows up in pitch decks, product launches, quarterly planning meetings, and LinkedIn posts about the future of work. But most discussions skip past a basic question: what actually makes a task "agentic" in the first place?

Not every workflow benefits from an agent. Some tasks need simple automation. Others require human judgment from start to finish. The sweet spot for agentic systems sits somewhere between these extremes, where three specific characteristics converge.

Building Moltin has taught me to ignore the noise. LinkedIn posts fade, but architectural requirements don't. So let's cut through the hype and examine what separates genuinely agentic tasks from everything else.

Sustained Multi-Step Interaction

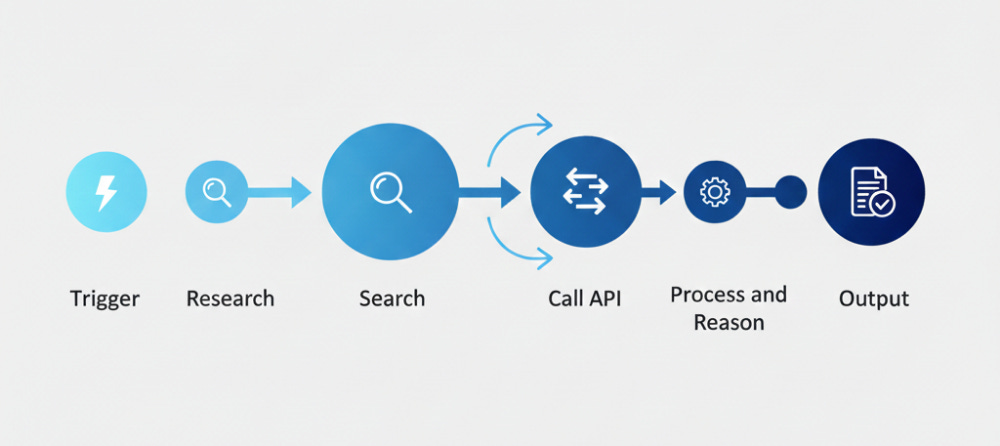

True agentic tasks can't be solved in a single pass. They require multiple actions, decisions, and responses over time. Think about how you'd book a complex business trip. You check flights, compare prices, verify hotel availability, adjust for meeting schedules, and revisit earlier choices when conflicts emerge.

Each step informs the next one. The system can't just execute a predetermined sequence and call it done. It needs to maintain context across interactions. What happened in step three matters when you're on step seven.

This is different from a simple API call. It's different from running a script. The task has depth. It unfolds. A traditional automation might handle one piece of this puzzle, but an agent manages the entire progression.

The duration matters too. Some agentic tasks take minutes. Others span hours or days. The key isn't the time itself but whether the system can pick up where it left off. Can it remember what it learned? Can it reference earlier decisions?

Consider customer support. A single FAQ answer isn't agentic. But diagnosing a complex technical issue over multiple messages, gathering information from different sources, trying solutions, and adapting based on results? That's sustained interaction.

The system needs memory. Not just logs, but working memory that shapes ongoing behavior. When a user says "that didn't work", the agent should know what "that" refers to without asking them to repeat the entire history.
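
To make the "that didn't work" case concrete, here is a minimal sketch of working memory for a support conversation. It is an illustration, not a production design: `Attempt`, `WorkingMemory`, and the rule that "that" means the most recent attempt still awaiting a result are all assumptions of mine, and the string matching is deliberately naive.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Attempt:
    """One thing the agent tried, and how it turned out."""
    action: str
    outcome: str = "pending"


@dataclass
class WorkingMemory:
    """Hypothetical working memory for one support conversation."""
    attempts: list[Attempt] = field(default_factory=list)

    def record(self, action: str) -> None:
        self.attempts.append(Attempt(action))

    def resolve_that(self) -> Attempt | None:
        """Assume "that" means the latest attempt still awaiting a result."""
        for attempt in reversed(self.attempts):
            if attempt.outcome == "pending":
                return attempt
        return None

    def handle_feedback(self, message: str) -> str:
        target = self.resolve_that()
        if target is None:
            return "Which step are you referring to?"
        if "didn't work" in message.lower():
            target.outcome = "failed"
            ruled_out = ", ".join(
                a.action for a in self.attempts if a.outcome == "failed"
            )
            return f"Noted: '{target.action}' failed. Already ruled out: {ruled_out}."
        target.outcome = "worked"
        return f"Good, '{target.action}' resolved it."


memory = WorkingMemory()
memory.record("restart the router")
print(memory.handle_feedback("That didn't work"))
memory.record("update the firmware")
print(memory.handle_feedback("that didn't work either"))
```

The second reply lists both failed fixes. A log could store the same facts, but here they change what the agent says and does next.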

Partial Observability

Agentic tasks operate in environments where the system can't see everything at once. Information is hidden, incomplete, or emerges only through interaction. You're solving a puzzle without seeing all the pieces.

A chess engine doesn't face partial observability. It sees the entire board. Every piece, every legal move. That's full observability, even if the problem is computationally hard. An agent booking your travel? It doesn't know your actual preferences until it asks. It can't see your calendar conflicts until it checks. Flight prices change while it's thinking.

This creates genuine uncertainty. The agent must act without complete information. It forms hypotheses, tests them, and updates its understanding. Sometimes it guesses wrong. That's not a bug, it's the nature of the problem.

Think about competitive intelligence research. You're trying to understand a competitor's strategy, but you can only see public information. Press releases. Job postings. Product updates. Each data point gives you a glimpse, never the full picture. An agent tackling this task must synthesize fragments into a coherent view.

Medical diagnosis works the same way. Symptoms are clues, not answers. Test results narrow the possibilities but rarely offer certainty. A diagnostic agent orders tests based on probabilities, interprets results in context, and adjusts its thinking as new information arrives.

The challenge compounds when the environment changes during execution. Markets shift. Servers go down. People change their minds. Your agent started with incomplete information, and now that incomplete information is also outdated.

Welcome to the real world.

Partial observability forces agents to be strategic about information gathering. What should I check first? Which questions will reduce uncertainty the most? There's a cost to every query, whether that's time, money, or cognitive load on a human in the loop.
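
One common way to frame "which question reduces uncertainty the most" is expected information gain per unit cost. The sketch below assumes the agent holds a probability for each hypothesis and knows what answer each query would give under each one. The outage scenario, the probabilities, the costs, and the names `Query`, `expected_gain`, and `next_query` are all made up for illustration.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


def entropy(probs):
    """Shannon entropy, in bits, of a probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


@dataclass
class Query:
    name: str
    cost: float    # time, money, or human attention, in whatever unit you pick
    answers: dict  # hypothesis -> answer this query returns if it is true


def expected_gain(prior, query):
    """Expected drop in entropy (bits) from running the query."""
    groups = defaultdict(list)
    for hypothesis, p in prior.items():
        groups[query.answers[hypothesis]].append(p)
    remaining = 0.0
    for ps in groups.values():
        mass = sum(ps)  # probability of seeing this answer
        if mass > 0:
            remaining += mass * entropy([p / mass for p in ps])
    return entropy(prior.values()) - remaining


def next_query(prior, queries):
    """Pick the query with the best expected gain per unit cost."""
    return max(queries, key=lambda q: expected_gain(prior, q) / q.cost)


# Made-up beliefs about why a site is down.
prior = {"dns": 0.5, "database": 0.25, "bad_deploy": 0.25}
queries = [
    Query("check_status_page", 1, {"dns": "up", "database": "up", "bad_deploy": "up"}),
    Query("ping_dns", 1, {"dns": "fail", "database": "ok", "bad_deploy": "ok"}),
    Query("full_log_audit", 5, {"dns": "a", "database": "b", "bad_deploy": "c"}),
]
best = next_query(prior, queries)
print(best.name, round(expected_gain(prior, best), 2))
```

With these numbers the agent picks `ping_dns`. The status page returns the same answer under every hypothesis, so it teaches nothing. The full audit would settle the question, but it costs five times as much for only 1.5 bits.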

Adaptive Strategy Refinement

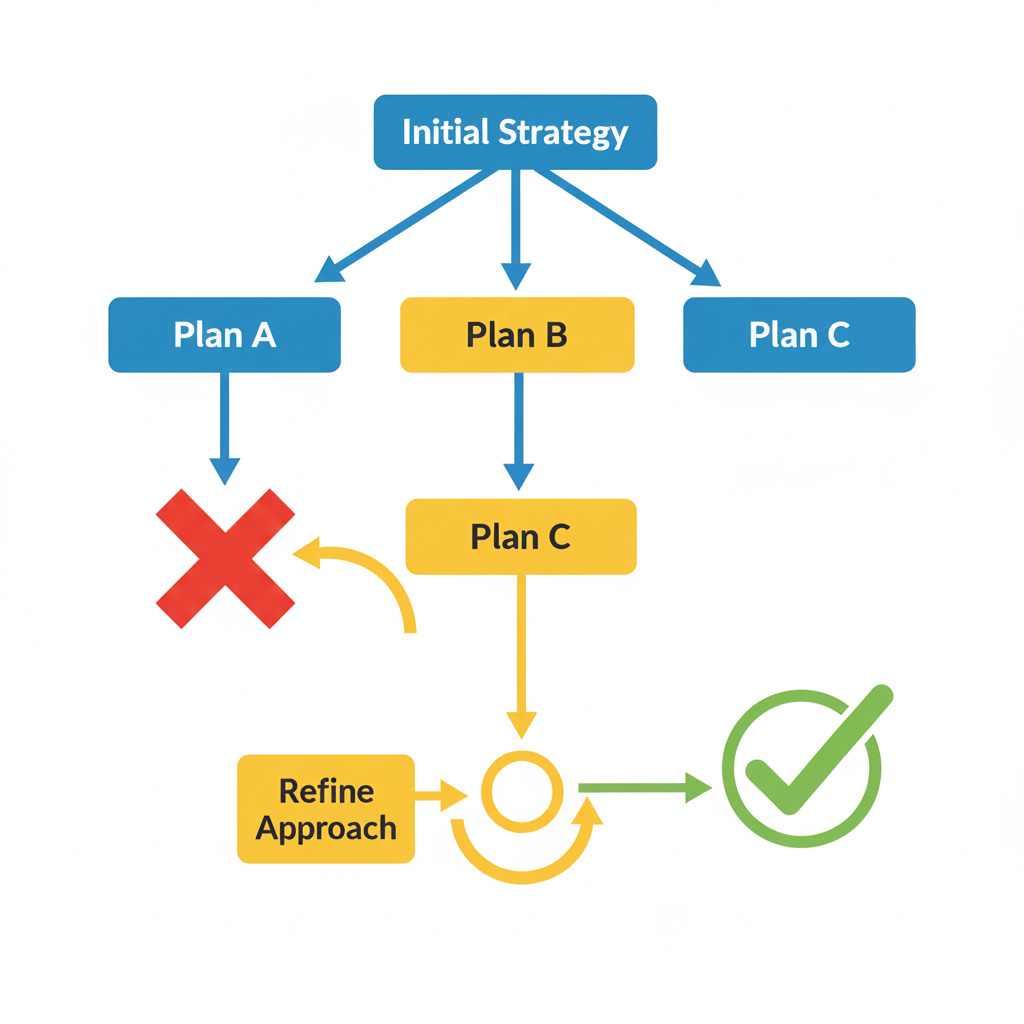

The final piece is adaptation. Agentic tasks don't have a fixed solution path. The approach must evolve based on what the agent discovers along the way. Plan A fails, so you try Plan B. Then you realize Plan C would've been smarter from the start.

This goes beyond simple branching logic. If-then statements don't cut it. The agent needs to reason about which strategies will work in the current context. It adjusts not just its actions but its entire approach to the problem.

Consider content moderation at scale. You can't write rules for every edge case. The agent must learn patterns, recognize when those patterns break down, and develop new approaches. A technique that worked yesterday might fail today because bad actors adapted. Your agent needs to adapt faster.

Software debugging is another clear example. You don't know where the bug is, so you form a hypothesis. You add logging, run tests, examine outputs. Each result tells you something. Sometimes it confirms your theory. Sometimes it forces you to rethink everything. An effective debugging agent refines its strategy based on what each experiment reveals.

The refinement happens at multiple levels. Tactically, the agent adjusts its immediate next steps. Strategically, it reconsiders its overall approach. Sometimes it realizes the entire problem framing was wrong and needs to step back.

This is where machine learning and reasoning intersect. The agent might use learned patterns to guide initial strategies. But it also needs explicit reasoning about whether those patterns apply to the current situation. Pure learning can be brittle. Pure logic can be inflexible. Agentic systems blend both.

Human feedback becomes crucial here. An agent that can't learn from correction will keep making the same mistakes. But one that updates too aggressively based on every comment will thrash between strategies. The right balance is task-dependent and often requires careful tuning.
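
Here is one way to picture that balance, as a sketch under my own assumptions rather than a recommended design. Each strategy keeps a running score. A `learning_rate` controls how far one piece of feedback moves that score, and a `switch_margin` requires a rival to be clearly ahead before the agent changes course. The class name, both parameters, and the feedback stream are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StrategySelector:
    scores: dict
    current: str
    learning_rate: float = 0.2  # how far one piece of feedback moves a score
    switch_margin: float = 0.1  # lead a rival needs before the agent switches
    switches: int = 0

    def feedback(self, strategy: str, reward: float) -> str:
        # Exponential moving average of the rewards seen for this strategy.
        old = self.scores[strategy]
        self.scores[strategy] = old + self.learning_rate * (reward - old)
        best = max(self.scores, key=self.scores.get)
        lead = self.scores[best] - self.scores[self.current]
        if best != self.current and lead > self.switch_margin:
            self.current = best
            self.switches += 1
        return self.current


# Made-up reviewer feedback: strategy A is right more often, but not always.
stream = [("A", 1), ("B", 0), ("A", 0), ("B", 1), ("A", 1), ("B", 0),
          ("A", 1), ("B", 0), ("A", 0), ("B", 1), ("A", 1), ("B", 0)]

aggressive = StrategySelector({"A": 0.5, "B": 0.5}, "A",
                              learning_rate=1.0, switch_margin=0.0)
damped = StrategySelector({"A": 0.5, "B": 0.5}, "A")

for strategy, reward in stream:
    aggressive.feedback(strategy, reward)
    damped.feedback(strategy, reward)

print("aggressive switches:", aggressive.switches)
print("damped switches:", damped.switches)
```

On this stream the aggressive selector flips back and forth while the damped one stays on A throughout. The damping has a cost: a damped agent also reacts more slowly when the better strategy really does change, which is why these values need tuning per task.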

Why All Three Matter

Remove any one of these requirements and you lose the “agentic” quality. A multi-step process with full observability? That’s just a complex algorithm. Partial observability without adaptation? That’s pattern matching under uncertainty. Adaptation without sustained interaction? That’s reinforcement learning on individual decisions.

The combination creates emergent behavior that feels genuinely intelligent. The system doesn’t just react, it pursues goals. It doesn’t just execute, it strategizes. When things go wrong, it figures out why and tries something different.

This is why not everything should be agentic. Simple tasks with clear paths and complete information don’t need this machinery. Adding an agent adds complexity, failure modes, and costs. Use the simplest tool that solves the problem.

But when all three requirements align, agents shine. They handle the tasks that traditionally required human judgment, not because they’re magic, but because the problem structure demands exactly the capabilities agents provide.

Building for Agentic Tasks

If you’re designing systems for agentic work, these requirements have practical implications. Your architecture needs state management for sustained interaction. It needs sensors and probes for gathering information in partially observable environments. It needs reasoning components that can evaluate and switch strategies.

You also need observability into the agent itself. When an agent makes a decision, can you trace why? When it shifts strategies, can you see what triggered the change? Black box agents are hard to trust and harder to improve.
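
A decision trace can be as simple as a structured record per decision. The sketch below shows one possible shape, assuming you control the agent loop and can call `log` wherever a choice is made. `TraceEvent`, `DecisionTrace`, and the debugging entries are hypothetical examples, not a standard format.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TraceEvent:
    step: int
    kind: str     # "decision" or "strategy_switch"
    choice: str
    reason: str
    evidence: list = field(default_factory=list)


class DecisionTrace:
    """Append-only record of what the agent chose and why."""

    def __init__(self):
        self.events = []

    def log(self, kind, choice, reason, evidence=()):
        event = TraceEvent(len(self.events) + 1, kind, choice, reason, list(evidence))
        self.events.append(event)
        return event

    def why(self, choice):
        """Recorded reasons for a given choice, oldest first."""
        return [e.reason for e in self.events if e.choice == choice]

    def switches(self):
        """Every point where the agent changed its overall approach."""
        return [e for e in self.events if e.kind == "strategy_switch"]

    def to_jsonl(self):
        """One JSON object per line, ready to ship to a log store."""
        return "\n".join(json.dumps(asdict(e)) for e in self.events)


# Made-up debugging session.
trace = DecisionTrace()
trace.log("decision", "add_logging", "No stack trace, need more signal")
trace.log("decision", "bisect_commits", "Logs suggest a regression in recent code",
          evidence=["errors start after the latest release"])
trace.log("strategy_switch", "inspect_config",
          "Bisect found no bad commit, so the code hypothesis is ruled out")

print(trace.why("inspect_config"))
print(len(trace.switches()))
```

With this in place, "why did it do that?" and "what triggered the change?" become lookups over recorded events.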

Testing gets more complex too. Unit tests aren’t enough. You need scenarios that play out over time, with realistic information hiding and strategy challenges. This means simulation environments and evaluation frameworks that go beyond accuracy metrics.
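
As a rough sketch of a scenario test, here is a toy environment that hides which component is broken and charges one step per probe. The harness records steps used alongside success. Everything in it (`HiddenFaultEnv`, `linear_agent`, `run_scenarios`, the component names) is a hypothetical stand-in for a real simulation environment.

```python
class HiddenFaultEnv:
    """Toy environment: one component is broken, and the agent can only
    find out which by probing components one at a time."""

    def __init__(self, components, faulty, max_steps=10):
        self.components = list(components)
        self._faulty = faulty  # hidden from the agent
        self.max_steps = max_steps
        self.steps = 0

    def probe(self, component):
        if self.steps >= self.max_steps:
            raise RuntimeError("step budget exhausted")
        self.steps += 1
        return "error" if component == self._faulty else "ok"

    def check(self, diagnosis):
        return diagnosis == self._faulty


def linear_agent(env):
    """Baseline agent: probe in order until something reports an error."""
    for component in env.components:
        if env.probe(component) == "error":
            return component
    return None


def run_scenarios(agent, scenarios):
    """Play each scenario to the end and record outcome plus steps used."""
    results = []
    for name, env in scenarios.items():
        try:
            solved = env.check(agent(env))
        except RuntimeError:
            solved = False
        results.append({"scenario": name, "solved": solved, "steps": env.steps})
    return results


COMPONENTS = ["dns", "cache", "db", "queue"]
scenarios = {
    "fault_found_early": HiddenFaultEnv(COMPONENTS, faulty="dns"),
    "fault_found_late": HiddenFaultEnv(COMPONENTS, faulty="queue"),
    "tight_budget": HiddenFaultEnv(COMPONENTS, faulty="queue", max_steps=3),
}
for result in run_scenarios(linear_agent, scenarios):
    print(result)
```

The baseline solves the first two scenarios and runs out of budget on the third. A pass/fail accuracy number would hide the fact that it needed four probes in the second. A real suite would also change the environment mid-run to test adaptation.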

The human-agent interface becomes critical. People need to understand what the agent is doing without watching every action. They need to provide guidance without micromanaging. They need override capabilities when the agent goes off track. This is interaction design, not just UX polish.

The Real Test

Here’s how to know if a task is genuinely agentic. Ask yourself: could you write a flowchart that solves this task completely? If yes, it’s automation, not agency. Could you solve it with a single large language model call? If yes, it’s generation, not agency.

Agentic tasks resist simple decomposition. They’re messy. They require judgment. The path from start to finish isn’t straight, and you can’t see it all from the beginning. That’s exactly when you need an agent.

The three requirements I’ve outlined aren’t arbitrary. They emerge from the fundamental structure of problems that demand adaptive, goal-directed behavior over time. Get comfortable with these concepts and you’ll stop chasing agentic solutions for problems that don’t need them.

You’ll also recognize the problems that do. And those are the ones where agents don’t just add value, they transform what’s possible.