When Change Outpaces Adaptation

What Jevons Paradox Tells Us About the Age of Agentic AI

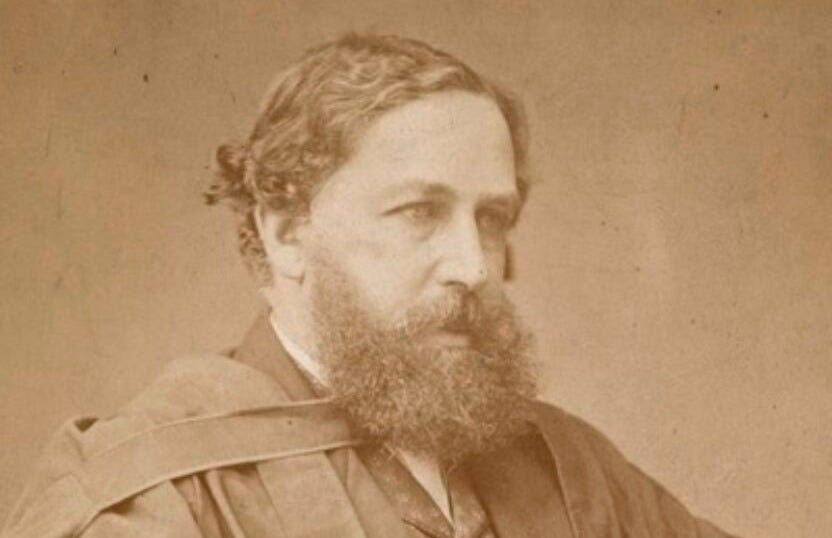

In January 2025, a Chinese AI lab called DeepSeek released R1, a reasoning model built on a base model that reportedly cost about $5.6 million in compute to train. For context, OpenAI reportedly spent somewhere north of $100 million training GPT-4. The internet declared a paradigm shift. Nvidia lost nearly $600 billion in market cap in a single day. And Microsoft CEO Satya Nadella fired off a LinkedIn post quoting a 19th-century British economist.

That economist was William Stanley Jevons. His observation, now called the Jevons Paradox, is simple and a little unsettling:

When you make a resource more efficient to use, people don’t use less of it. They use more. Much more.

Jevons documented this with coal in his 1865 book, The Coal Question. After James Watt’s improved steam engine arrived, factories didn’t burn the same amount of coal to do the same work. They burned more coal to do vastly more work. Efficiency didn’t conserve the resource. It unlocked demand that didn’t exist before.

Now replace coal with compute. And steam engines with agentic AI.

Here’s what worries me, and what should probably worry you too. When every enterprise deploys the same efficient AI models to automate the same kinds of work, the Jevons Paradox doesn’t just predict more AI usage. It predicts an explosion in AI activity, compute demand, energy consumption, and competitive pressure, all at once.

Efficiency is no longer a cost-saving measure. It’s a trigger.

From Interfaces to Labor

For most of its commercial life, AI has been an interface. You ask it something, it answers. The interaction ends. The cost per query was low enough to be a rounding error for most companies, and the value was real but bounded. Customer support bots, code suggestions, search summaries.

Agentic AI is something different. An agent doesn’t just answer a question. It takes a goal, breaks it into steps, uses tools, makes decisions, and executes. It does labor. And when the cost of that labor drops, the economics of automation change completely.

Think about what was previously too expensive to automate.

Researching 500 target accounts for a sales team.

Monitoring competitor pricing across 200 SKUs every hour.

Running personalized outreach sequences that actually adapt to responses.

Drafting compliance documentation for every product variant.

These tasks went unautomated for a simple reason. Having a human do them cost real money, but not so much that anyone was going to build a custom software system to replace that human. They lived in the gray zone between too expensive to ignore and too complex to automate cheaply.

Efficient, cheap AI agents dissolve that gray zone. When the cost of an agent running a complex, multi-step workflow drops by an order of magnitude, the entire frontier of “economically viable automation” shifts.

Tasks that used to require a full-time hire now cost a few dollars a day. And companies don’t stop at replacing what they were already doing. They start doing things they never did before.

That’s Jevons at work. Lower cost doesn’t shrink consumption. It expands it into territory that was previously off-limits. The moment AI stops being an interface and starts being labor, the demand curve stops looking like a gentle slope and starts looking like a hockey stick.

DeepSeek’s R1 didn’t just prove that efficient models were possible. It proved they were coming. And every enterprise watching that news cycle started doing the same mental math.
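To make the rebound concrete, here is a minimal sketch using the textbook constant-elasticity demand curve, Q = Q0 * (c / c0) ** (-e). The function name, the baseline values, and the elasticity figures are illustrative assumptions, not measurements of any real AI market. The point is only the shape: when demand is elastic (e above 1), a tenfold drop in unit cost raises total spend instead of lowering it.

```python
def rebound(unit_cost: float, elasticity: float,
            base_cost: float = 1.0, base_quantity: float = 1.0) -> tuple[float, float]:
    """Return (quantity, total_spend) under constant-elasticity demand.

    Demand follows Q = Q0 * (c / c0) ** (-elasticity).
    All inputs are hypothetical, chosen for illustration only.
    """
    quantity = base_quantity * (unit_cost / base_cost) ** (-elasticity)
    return quantity, quantity * unit_cost


# Unit cost falls by 10x (from 1.0 to 0.1) under three assumed elasticities.
for e in (0.5, 1.0, 1.5):
    quantity, spend = rebound(unit_cost=0.1, elasticity=e)
    print(f"elasticity={e}: usage x{quantity:.1f}, total spend x{spend:.2f}")
```

With an elasticity of 0.5, usage roughly triples and total spend falls to about a third. At 1.0, spend is flat. At 1.5, usage grows about 32-fold and total spend roughly triples. Jevons-style expansion is the third case, and whether agentic AI sits there is an empirical question this sketch does not answer.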

The Red Queen Problem

Here’s the competitive trap that nobody is talking about loudly enough. If every company has access to the same high-efficiency AI models, then no single company gains a lasting edge from the tool itself. The tool is table stakes. What you do with it is the game.

This creates what evolutionary biologists call a Red Queen dynamic. In Lewis Carroll’s Through the Looking-Glass, the Red Queen tells Alice that in her country, “it takes all the running you can do, to keep in the same place.”

When everyone is running faster, standing still means falling behind. Competitive pressure doesn’t disappear when a technology becomes commoditized. It intensifies, because the floor just rose.

So what do companies actually do when everyone has the same tools? They race on three dimensions.

Volume

The first response is to deploy more agents faster. If your competitor’s sales team is running 10 AI-assisted outreach sequences, you run 100.

If they’re monitoring their top 50 accounts, you monitor 5,000.

Volume is the most obvious lever, and it’s the one that shows up first. It’s also the one that directly drives Jevons-style demand expansion. You’re not replacing one human’s workload.

You’re creating a workload no human could’ve carried.

Complexity

Volume alone doesn’t win for long. So the second move is to build more complex, proprietary systems on top of the same efficient models. A generic agent is easy to copy. An agent that’s deeply integrated with your proprietary data, your business rules, your customer history, and your operational workflows is not.

Complexity is how you turn cheap infrastructure into a real moat.

Complexity, however, is becoming less expensive to build but remains expensive to run. More elaborate agent systems require more compute, more monitoring, more iteration. The efficiency gains at the model layer get eaten up by the complexity added at the system layer.

This is the paradox in microcosm. Cheaper inference leads to more ambitious deployments, which leads to higher total spending.
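A back-of-the-envelope sketch shows how that can happen. The `AgentWorkload` class and every number in it are hypothetical, picked only to illustrate the arithmetic: the per-token price falls tenfold, while the system built on top gets longer prompts, more steps per task, and more tasks per day.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentWorkload:
    """A hypothetical agent deployment; all figures are made up for illustration."""
    price_per_1k_tokens: float  # dollars per 1,000 tokens
    tokens_per_step: int        # prompt plus completion tokens for one agent step
    steps_per_task: int         # tool calls and reasoning steps per task
    tasks_per_day: int

    def daily_tokens(self) -> int:
        return self.tokens_per_step * self.steps_per_task * self.tasks_per_day

    def daily_cost(self) -> float:
        return self.daily_tokens() / 1000 * self.price_per_1k_tokens


# Before: pricier model, simple workflow.
before = AgentWorkload(price_per_1k_tokens=0.01, tokens_per_step=2_000,
                       steps_per_task=5, tasks_per_day=1_000)
# After: 10x cheaper model, but a more elaborate system running at higher volume.
after = AgentWorkload(price_per_1k_tokens=0.001, tokens_per_step=4_000,
                      steps_per_task=20, tasks_per_day=5_000)

print(f"before: {before.daily_tokens():,} tokens, ${before.daily_cost():,.2f}/day")
print(f"after:  {after.daily_tokens():,} tokens, ${after.daily_cost():,.2f}/day")
```

Under these assumed inputs, the price per token drops by 10x, token volume rises by 40x, and the daily bill goes from about $100 to about $400.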

Constant Iteration

The third lever is speed of experimentation. When the cost to run an agent workflow drops, so does the cost to fail. Companies start running thousands of automated experiments that would have been cost-prohibitive six months ago.

A/B tests on outreach copy. Variant testing on pricing strategies. Parallel evaluation of different agent architectures. Low failure cost means high experiment volume, which means high compute demand.

The competitive logic is self-reinforcing. Efficiency enables scale. Scale creates pressure. Pressure demands more scale. Nobody calls a timeout.

In a Red Queen race, the winner isn’t the one who runs the farthest. It’s the one who keeps running longest without falling over.

A Bill Nobody Budgeted For

Nadella’s LinkedIn post wasn’t just economic theory. It was a signal. After DeepSeek’s release, the instinct was to say that cheaper AI meant less infrastructure investment. Nadella said the opposite. More efficient models will drive more usage, which will drive more infrastructure demand. The GPU boom doesn’t slow down. It accelerates.

The numbers are starting to bear that out.

The Math Nobody Wants to Do

According to the International Energy Agency’s 2025 report on Energy and AI, global electricity consumption from data centers is projected to more than double, reaching around 945 TWh by 2030.

In the United States specifically, the IEA projects data center power consumption will increase by approximately 240 TWh compared to 2024 levels, a rise of about 130%.
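Those two U.S. figures imply a baseline and a growth rate that are worth backing out. The sketch below is plain arithmetic on the rounded numbers quoted above. The helper names are mine, and treating 2024 to 2030 as six years of steady compounding is a simplifying assumption, so read the outputs as approximations.

```python
def implied_baseline(increase: float, fractional_rise: float) -> float:
    """Baseline level such that `increase` equals `fractional_rise` times the baseline."""
    return increase / fractional_rise


def compound_annual_growth(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that takes `start` to `end` in `years` years."""
    return (end / start) ** (1 / years) - 1


us_increase_twh = 240.0  # rounded figure quoted above
us_rise = 1.30           # "about 130%" expressed as a fraction

us_2024_twh = implied_baseline(us_increase_twh, us_rise)       # about 185 TWh
us_2030_twh = us_2024_twh + us_increase_twh                    # about 425 TWh
yearly = compound_annual_growth(us_2024_twh, us_2030_twh, 6)   # about 15% a year

print(f"implied 2024 baseline: {us_2024_twh:.0f} TWh")
print(f"implied 2030 level:    {us_2030_twh:.0f} TWh")
print(f"implied yearly growth: {yearly:.1%}")
```

In other words, a 130% rise over six years works out to roughly 15% compound growth per year, if growth were steady.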

A 2024 study published in Nature Sustainability, conducted by researchers from Oxford, Cornell, KTH Royal Institute of Technology, and others, projected that AI servers in the U.S. alone could consume between 220 and 532 TWh annually by 2030, potentially as much as 10% of the nation’s current electricity usage.

On the higher end of projections, the National Center for Energy Analytics estimates that combined data center and broader digital infrastructure demands could approach 15 to 20% of overall U.S. electricity demand in the early 2030s.

Here’s what makes this particularly hard to solve. Each individual AI inference operation is getting more efficient. But the total number of inferences is growing so fast that per-unit efficiency gains can’t keep pace. When you make each “thought” cheaper, people schedule a trillion more of them.

Demand Follows Efficiency Down

The infrastructure industry is already feeling this. Vertiv, one of the major data center equipment suppliers, reported a 29% revenue increase and 60% order growth in Q3 2025, with a backlog of $9.5 billion, according to Data Center Dynamics. That’s not the profile of a market slowing down after an efficiency breakthrough. That’s a market that believes the workload is coming.

Some infrastructure analysts have observed a rough pattern. For every significant reduction in inference costs, deployment requests tend to increase by multiples, not percentages.

The exact multiplier varies by market and model generation, but the direction is consistent. Cheaper compute doesn’t reduce compute demand. It changes what compute demand is applied to.

Meta raised its 2025 AI infrastructure spending to $60-65 billion after DeepSeek’s release, not despite it. That’s the Jevons Paradox in a budget line item.

The Debate That Matters

The energy question is important. But the question most people actually care about is the job question. And on this one, Jevons gives us less certainty.

The optimistic case draws on a historical pattern. When printing became cheap, more writers were employed, not fewer. When photography became affordable, more photographers existed, not fewer. When spreadsheets replaced manual calculation, more financial analysts were hired.

In each case, cheaper access to a tool expanded the total market for the skill, rather than eliminating it. Applied to AI: cheaper agents should expand demand for the humans who design, manage, and improve those agents.

Digital marketing is a reasonable example. AI has made content creation, ad targeting, and campaign analytics dramatically cheaper. The field has grown, not contracted.

More companies now do digital marketing than before, more ambitiously than before, because the cost to enter and scale dropped. The humans got more productive, not more unemployed.

But there’s a counterargument that’s harder to dismiss than the optimists like to admit. The historical cases involved tools that augmented human skills. A camera still required a photographer. A spreadsheet still required an analyst. The tool extended what the human could do.

Agentic AI is different in a specific way. It can substitute for human cognition, not just extend it. An agent can reason, decide, communicate, and iterate without a human in the loop.

If AI is a substitute for thinking, not just a tool for thinkers, then the Jevons rebound in employment may not apply. You might get more tasks done, without more humans doing them.

The honest answer is that we don’t know yet. The historical analogies are instructive but imperfect. The people who are most confident in either direction are probably the most wrong.

What we can say is that the transition period, the years between “agents are novel” and “agents are infrastructure,” will be genuinely disruptive regardless of where it lands. The people who will fare best are the ones who understand what the agents can’t do yet, and who build that as their competitive advantage.

What This Means for Enterprise Strategy

If you take Jevons seriously, a few things follow.

First, the efficiency gains from better models are real and genuinely useful. But they don’t reduce your total AI spend. They redirect it. You’ll spend the savings on volume, on complexity, on experiments you couldn’t run before.

Budget accordingly. The CFO who thinks AI efficiency means a smaller AI line item is going to be surprised.

Second, the competitive advantage isn’t the model. It’s what you build on top of the model. The race to differentiate will happen at the data layer, the integration layer, and the workflow design layer.

Proprietary data, tight operational integration, and genuine domain expertise are your moat. The underlying model is just coal.

Third, governance matters more than it did. When AI moves from answering questions to executing decisions at scale, the failure modes get bigger. A bad answer to a question is a minor annoyance. A bad agent running thousands of customer interactions or making thousands of pricing decisions before anyone notices is a different category of problem. Speed of iteration has to be matched by speed of oversight.

Fourth, energy and infrastructure are real cost inputs now. The bill for all this AI activity is going to show up somewhere. For companies running large agentic deployments, compute costs are becoming a significant operational expense.

The teams that treat this as an engineering and procurement problem, rather than just a technology problem, will have a real cost advantage.
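On the governance point, one way to match speed of iteration with speed of oversight is an automatic stop. The sketch below is a minimal circuit breaker, assuming some upstream check already labels each agent action as flagged or not. The class name, thresholds, and window size are hypothetical, and a real deployment would need far more than this.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AgentCircuitBreaker:
    """Stops an agent once too many recent actions are flagged (hypothetical guardrail)."""
    window_size: int = 100        # number of most recent actions to consider
    max_flag_rate: float = 0.05   # trip when the flagged share of the window exceeds this
    min_samples: int = 20         # don't judge on too little data
    tripped: bool = False
    _recent: deque = field(default_factory=deque, repr=False)

    def record(self, flagged: bool) -> bool:
        """Record one action outcome; return True if the agent may keep running."""
        if self.tripped:
            return False  # stays halted until a human resets it
        self._recent.append(flagged)
        if len(self._recent) > self.window_size:
            self._recent.popleft()  # keep only the sliding window
        if len(self._recent) >= self.min_samples:
            flag_rate = sum(self._recent) / len(self._recent)
            if flag_rate > self.max_flag_rate:
                self.tripped = True
        return not self.tripped


breaker = AgentCircuitBreaker(window_size=50, max_flag_rate=0.1, min_samples=10)
outcomes = [False] * 10 + [True, True, False]  # assumed results of some review check
for i, flagged in enumerate(outcomes, start=1):
    if not breaker.record(flagged):
        print(f"halted after action {i}; escalate to a human reviewer")
        break
```

The design choice worth noting is that the breaker fails closed. Once tripped, it stays tripped until someone deliberately resets it, which caps how many bad decisions can pile up before anyone notices.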

Jevons was writing about coal in 1865, but his insight holds for any resource where efficiency unlocks demand that was previously constrained by cost. He wasn’t being pessimistic. He was just being accurate.

The question isn’t whether AI adoption will slow down as models become more efficient. It won’t.

The question is whether you’re building for the scale that comes next, or assuming that efficiency means things get simpler. They don’t.

They get faster.

Sources

International Energy Agency (IEA). “Energy and AI.” April 2025. https://www.iea.org/reports/energy-and-ai

Nature Sustainability / Environmental Change Institute, University of Oxford. “AI Could Consume 10% of U.S. Electricity by 2030.” 2024. https://www.eci.ox.ac.uk/news/ai-could-consume-10-us-electricity-2030-researchers-outline-path-sustainable-growth

National Center for Energy Analytics. “The Rise of AI: A Reality Check on Energy and Economic Impacts.” November 2025. https://energyanalytics.org/the-rise-of-ai-a-reality-check-on-energy-and-economic-impacts/

Nadella, Satya. LinkedIn / X post. January 27, 2025.

Fortune. “What is Jevons paradox? The reason Satya Nadella says DeepSeek’s new AI is good news for tech.” January 27, 2025. https://fortune.com/2025/01/27/microsoft-ceo-satya-nadella-deepseek-optimism-jevons-paradox/

NPR Planet Money. “Why the AI World is Suddenly Obsessed with Jevons Paradox.” February 4, 2025. https://www.npr.org/sections/planet-money/2025/02/04/g-s1-46018/ai-deepseek-economics-jevons-paradox

S&P Global. “Global Data Center Power Demand to Double by 2030 on AI Surge: IEA.” April 10, 2025.

Data Center Dynamics. “Vertiv Revenue Jumps 29% on Booming Data Center Demand.” October 2025.